Publishing our Maize Row Navigation Algorithm

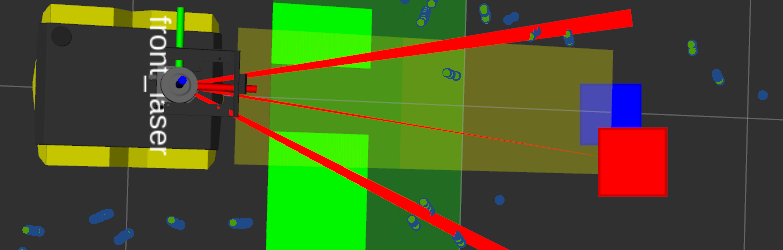

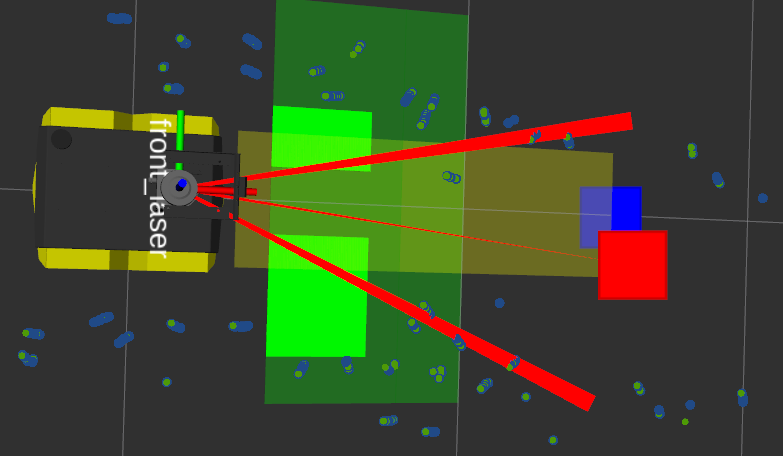

Following the release of our object detection deep learning code, model and dataset, we are now publishing the code we used for navigating within a crop row at the Field Robot Event. This code has now been in use at Kamaro for several years, and most recently won Task 1 at the competition in 2021.

A detailed description of the algorithm is available in the README file on GitHub, and in the (as yet unpublished) Proceedings of FRE 2021.

While we previously used this algorithm on Beteigeuze, a robot with two steerable axles, the published version uses standard ROS Twist messages, which makes it compatible with any differentially steered robot (such as the Jackal robot used at this year’s virtual FRE, or our own Dschubba).
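To illustrate why Twist output works with any differentially steered platform, here is a minimal sketch of how a robot base might turn the two relevant Twist fields (`linear.x` and `angular.z`) into left and right wheel speeds. This is not taken from the published code: the function name, the `WheelSpeeds` type, and the `track_width` parameter are assumptions for illustration, and the sketch deliberately avoids ROS imports so it runs on its own.

```python
from dataclasses import dataclass


@dataclass
class WheelSpeeds:
    """Hypothetical container for the two wheel speeds, in m/s."""
    left: float
    right: float


def twist_to_wheel_speeds(linear_x: float, angular_z: float,
                          track_width: float) -> WheelSpeeds:
    """Standard differential-drive kinematics.

    linear_x    forward speed in m/s (Twist.linear.x)
    angular_z   yaw rate in rad/s, positive = counterclockwise (Twist.angular.z)
    track_width distance between left and right wheels in m (assumed parameter)
    """
    if track_width <= 0:
        raise ValueError("track_width must be positive")
    # Each wheel travels on a circle offset by half the track width.
    half_diff = angular_z * track_width / 2.0
    return WheelSpeeds(left=linear_x - half_diff, right=linear_x + half_diff)


if __name__ == "__main__":
    # Drive forward at 0.5 m/s while turning left at 1 rad/s.
    print(twist_to_wheel_speeds(0.5, 1.0, 0.4))
```

On a real robot this conversion usually lives in the base controller, so a navigation node only needs to publish the Twist message and does not have to know the drive geometry.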

Many Kamaro members have worked on this project over the years. We thank all of them for their contribution!
