Kamaro wins sensing Task at FRE 2023

At this year’s Field Robot Event at the University of Maribor in Slovenia, our robot impressively outperformed 14 other competitors in the computer-vision-focused Task 3. The task measured the accuracy of the competing robots’ recognition systems on a series of images showing people, deer, and other objects. We also took 2nd place in the Freestyle category and 4th place in the Smart Irrigation task.

We would like to thank our sponsors, who supported our participation in the competition with financial resources and hardware. For a deep dive into our methodology, keep an eye out for our FRE 2023 proceedings, which we will publish on this blog soon.

Publishing the "Carbonaro" dataset from FRE 2022

Last year, we published the dataset and models we used for image recognition at the online Field Robot Event 2021. That blog post ended with a call for cooperation in creating a dataset and AI models for this year’s event, which has since taken place at DLG Feldtage in June. Team Carbonite from the Überlingen Students’ Research Center (SFZ Überlingen) approached us, and we subsequently worked together on building a realistic image dataset. As a nod to the two team names, this dataset shall henceforth be known as “Carbonaro”.

Publishing our Maize Row Navigation Algorithm

Following the release of our object detection deep learning code, model and dataset, we are now publishing the code we use for navigating within a crop row at the Field Robot Event. This code has been in use at Kamaro for several years and most recently won Task 1 at the 2021 competition. A detailed description of the algorithm is available in the README file on GitHub and in the Proceedings of FRE 2021.
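The following is not that algorithm. It is a minimal, generic sketch of the in-row navigation idea, assuming 2D points (for example from a laser scanner) in the robot frame: fit a straight line to the plants on each side, average the two lines to get the row centre, and turn towards it. The function names, the 1.5 m look-ahead window and the gains `k_offset` and `k_heading` are hypothetical choices for illustration only.

```python
import math
from typing import List, Tuple

# A 2D point in the robot frame, in metres: x points forward, y points left.
Point = Tuple[float, float]


def fit_line(points: List[Point]) -> Tuple[float, float]:
    """Least-squares fit of y = a * x + b; returns (slope a, intercept b)."""
    if len(points) < 2:
        raise ValueError("need at least two points to fit a line")
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    if sxx == 0.0:
        raise ValueError("all points share the same x; slope is undefined")
    a = sxy / sxx
    return a, mean_y - a * mean_x


def steering_command(points: List[Point], look_ahead: float = 1.5,
                     k_offset: float = 1.0, k_heading: float = 1.5) -> float:
    """Hypothetical controller: angular velocity in rad/s (positive = turn left)."""
    # Only use points in front of the robot and within the look-ahead window.
    ahead = [p for p in points if 0.0 < p[0] <= look_ahead]
    left_row = [p for p in ahead if p[1] > 0.0]
    right_row = [p for p in ahead if p[1] < 0.0]
    a_left, b_left = fit_line(left_row)
    a_right, b_right = fit_line(right_row)
    # The centre line between the two rows is the average of both fitted lines.
    a_centre = (a_left + a_right) / 2.0
    b_centre = (b_left + b_right) / 2.0
    heading_error = math.atan(a_centre)  # angle of the centre line w.r.t. the robot's heading
    lateral_offset = b_centre            # positive: the centre line lies to the robot's left
    return k_offset * lateral_offset + k_heading * heading_error
```

With the robot centred between two straight rows 0.75 m apart, both terms vanish and the command is zero. If the robot drifts 0.1 m towards the left row, the rows appear 0.1 m further right in the robot frame, the centre line moves to y = -0.1 and the command becomes negative, i.e. a right turn. Because the sketch splits points at y = 0, it assumes the robot is already roughly between the rows.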

Publishing our Object Detection Network and Dataset

FRE 2021 is over! It’s been a great week seeing all of the other teams again and competing with one another. We are grateful for the opportunity to build the competition environment and help shape this year’s Field Robot Event!

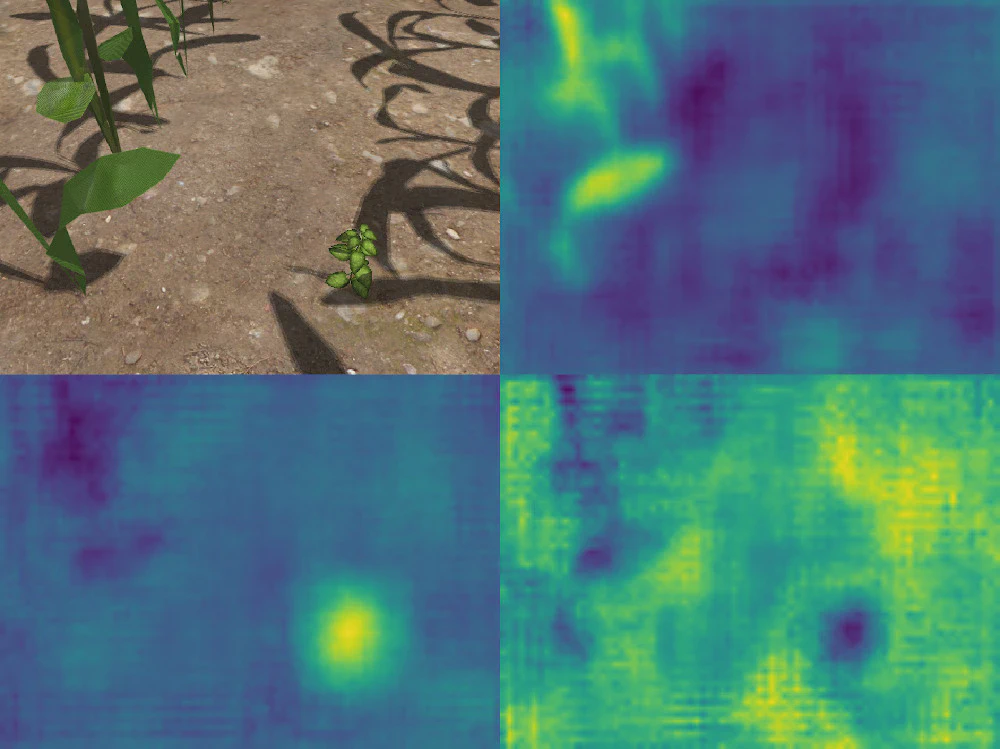

We were especially pleased with the organizers’ decision to include realistic 3D plant models for the weed detection task. For a long time, simple color-based detectors were sufficient to solve the detection tasks at the Field Robot Event. However, such approaches are inadequate for plant recognition in a realistic scenario. In a real field, robots have to distinguish between multiple plant species, all of which are green, in order to perform weeding, phenotyping and other tasks. Deep learning has matured considerably in recent years and appears to be the most suitable tool for this challenge.
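A typical color-based detector thresholds a vegetation index such as the Excess Green index (ExG = 2g - r - b on chromaticity-normalised channels). The sketch below illustrates the limitation described above: it separates plants from soil, but a crop pixel and a weed pixel land in the same class. The pixel values and the 0.1 threshold are made up for illustration and are not taken from any FRE setup.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]  # 8-bit (red, green, blue)


def excess_green(pixel: RGB) -> float:
    """Excess Green index on chromaticity-normalised channels: ExG = 2g - r - b."""
    r, g, b = pixel
    total = r + g + b
    if total == 0:
        return 0.0  # black pixel: no colour information
    # Equivalent to 2*(g/total) - r/total - b/total.
    return (2 * g - r - b) / total


def segment_plants(image: List[List[RGB]], threshold: float = 0.1) -> List[List[bool]]:
    """Mark every pixel whose ExG exceeds the threshold as 'plant'."""
    return [[excess_green(px) > threshold for px in row] for row in image]


# Hypothetical example pixels: bare soil, a maize leaf and a weed leaf.
soil, maize, weed = (120, 90, 60), (60, 140, 50), (80, 150, 70)
print(segment_plants([[soil, maize, weed]]))  # [[False, True, True]]
```

Both leaves score well above the threshold (0.68 and 0.5), so the detector cannot tell them apart; distinguishing the species requires features such as leaf shape and texture, which is where a learned model comes in.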

Precise localisation with loop closures using graph SLAM

The graph SLAM (Simultaneous Localisation And Mapping) algorithm plays a key role in our attempts to navigate autonomously in complex environments.

Take an in-depth look at how it works.

Why is graph SLAM important? Roughly, how does it work? What are loop closures, and why do you need them? These questions and more are answered here, together with open source code.
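To make the loop-closure idea concrete, here is a deliberately simplified, illustrative sketch that is not the code from the linked post: a one-dimensional pose graph where each pose is a single position, odometry edges carry drift, and one loop-closure edge states that the robot is back at its start. Real graph SLAM works with 2D or 3D poses including orientation, so its constraints are nonlinear and are solved iteratively (e.g. with Gauss-Newton); in this linear 1D toy a single least-squares solve suffices. The edge weights, the prior on the first pose and all measurement values are assumptions.

```python
from typing import List, Tuple

# (i, j, measured displacement x_j - x_i in metres, weight = inverse variance)
Edge = Tuple[int, int, float, float]


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[k]] for k, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def optimise_pose_graph(num_poses: int, edges: List[Edge],
                        prior_weight: float = 1e6) -> List[float]:
    """Minimise sum_k w_k * (x_j - x_i - z_k)^2 over 1D poses x_0 .. x_{n-1}."""
    h = [[0.0] * num_poses for _ in range(num_poses)]  # information matrix
    v = [0.0] * num_poses                               # information vector
    for i, j, z, w in edges:
        # Residual r = x_j - x_i - z has Jacobian -1 w.r.t. x_i and +1 w.r.t. x_j.
        h[i][i] += w
        h[j][j] += w
        h[i][j] -= w
        h[j][i] -= w
        v[i] -= w * z
        v[j] += w * z
    # Relative edges only fix the poses up to a common shift, so anchor x_0 at 0.
    h[0][0] += prior_weight
    return solve_linear(h, v)


# True motion: 1 m forward twice, then 1 m back twice. Odometry over-estimates
# forward and under-estimates backward motion, so dead reckoning ends 0.4 m off.
odometry = [(0, 1, 1.1, 1.0), (1, 2, 1.1, 1.0), (2, 3, -0.9, 1.0), (3, 4, -0.9, 1.0)]
# Loop closure: the robot recognises its starting place, i.e. x_4 - x_0 = 0.
loop_closure = [(0, 4, 0.0, 1.0)]

print("without loop closure:", optimise_pose_graph(5, odometry))
print("with loop closure:   ", optimise_pose_graph(5, odometry + loop_closure))
```

Without the loop closure the result is plain dead reckoning, ending at x_4 = 0.4 m. With it, the 0.4 m inconsistency is spread evenly over the five equally weighted edges of the loop (0.08 m each), giving poses of about 0, 1.02, 2.04, 1.06 and 0.08 m. Giving the loop-closure edge a higher weight would pull the final pose even closer to the start.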